VDMbee introduces its Agile DevOps (DORA) audit, which helps organizations improve the performance of their software development. To mark the introduction of this service, we are publishing a series of four blogs that highlight its context, foundations and approach. This blog, the second of the four, focuses on flow in Agile DevOps and beyond. It argues how “flow” in software development enables agile measurement and improvement of value delivery, and how it increases the value that is delivered.

Challenge

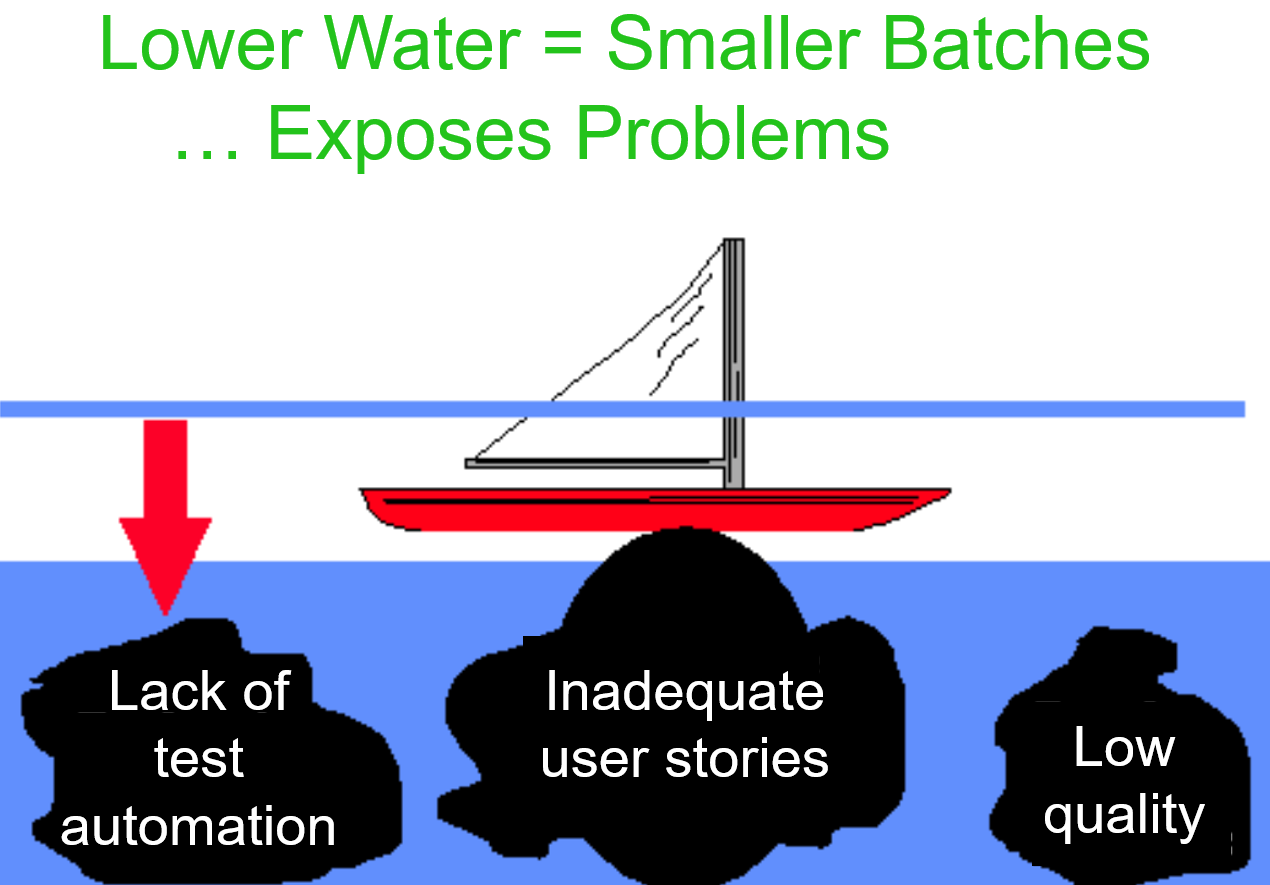

Traditionally, new releases of a software product are deployed to production at low frequency, for instance every eight weeks. This is mainly due to a lack of test automation: manual regression tests are very labor intensive, so they can’t be scheduled for each individual change (i.e., a task of a user story, or a bug fix). But many more factors cause a low pace of deployment. Low quality and high complexity of the software require substantial rework when issues are detected. User stories may be large and strongly dependent on each other. Developers may have to synchronize and merge too many branches. Reviewers may conduct reviews with significant delays. Feature management (“feature toggles”) may be largely missing. These factors may be just the tip of the iceberg of underlying problems. Together, they create the need for a low deployment frequency.

This is clearly something to improve. But how? In this blog we argue how a transition to “flow” puts wind in our sails to overcome these problems in a manageable way.

Flow in software development

As long as these and other problems exist, the only way to feel “comfortable” is to deploy large batches of changes. Each batch is then the result of implementing tasks and fixing bugs over a period of, say, eight weeks or more.

We can apply the “water and rocks analogy” of Lean Thinking here. The water level represents batch size. As long as batches are large, boats sail smoothly. But as soon as one tries to reduce batch sizes, boats hit the rocks! The rocks denote the underlying problems mentioned above. Eliminating these problems enables lowering the water level further, until small batches of changes can be deployed. This way we achieve “flow”: development and deployment in small batches.

The ideal is one-piece-flow: the developer implements a change, passes it on for review and starts implementing the next change. As soon as a change is passed on for review, the reviewer reviews it, passes it on for merge and picks the next change for review. The steps for building, testing and deployment are performed in the same way.
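The effect of batch size on time to customer can be illustrated with a small calculation. The sketch below is a simplified, hypothetical model, not a measurement: it assumes that one change is completed per working day and that a batch is deployed on the day its last change is completed. Forty changes correspond roughly to the eight-week release cycle mentioned above, assuming five working days per week.

```python
from statistics import mean


def waits_before_deploy(batch_size: int, num_changes: int) -> list[int]:
    """Days each change waits between completion and deployment.

    Hypothetical model: change n is completed on day n (1, 2, ...), and a
    batch is deployed on the day its last change is completed.
    """
    waits = []
    for day in range(1, num_changes + 1):
        # 0-based index of the batch this change belongs to
        batch_index = (day - 1) // batch_size
        # The batch is deployed when its last change is done (the final
        # batch may be smaller than batch_size)
        deploy_day = min((batch_index + 1) * batch_size, num_changes)
        waits.append(deploy_day - day)
    return waits


# Compare an eight-week batch, a two-week batch and one-piece-flow
for batch_size in (40, 10, 1):
    avg = mean(waits_before_deploy(batch_size, num_changes=40))
    print(f"batch size {batch_size:>2}: average wait {avg:.1f} days")
```

Under these assumptions, the average wait between completing a change and deploying it is (B - 1)/2 days for a batch size B that divides the number of changes: 19.5 days for batches of 40, 4.5 days for batches of 10, and zero for one-piece-flow.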

“Flow” denotes a way of working, whereby:

- minimal batches are developed and deployed

- batches just move on, from step to step in the process, rather than being scheduled

- there are no delays and queues between steps

This also implies that work is leveled (balanced): the work content per step is such that no piles of work build up between the steps.

Flow as prerequisite

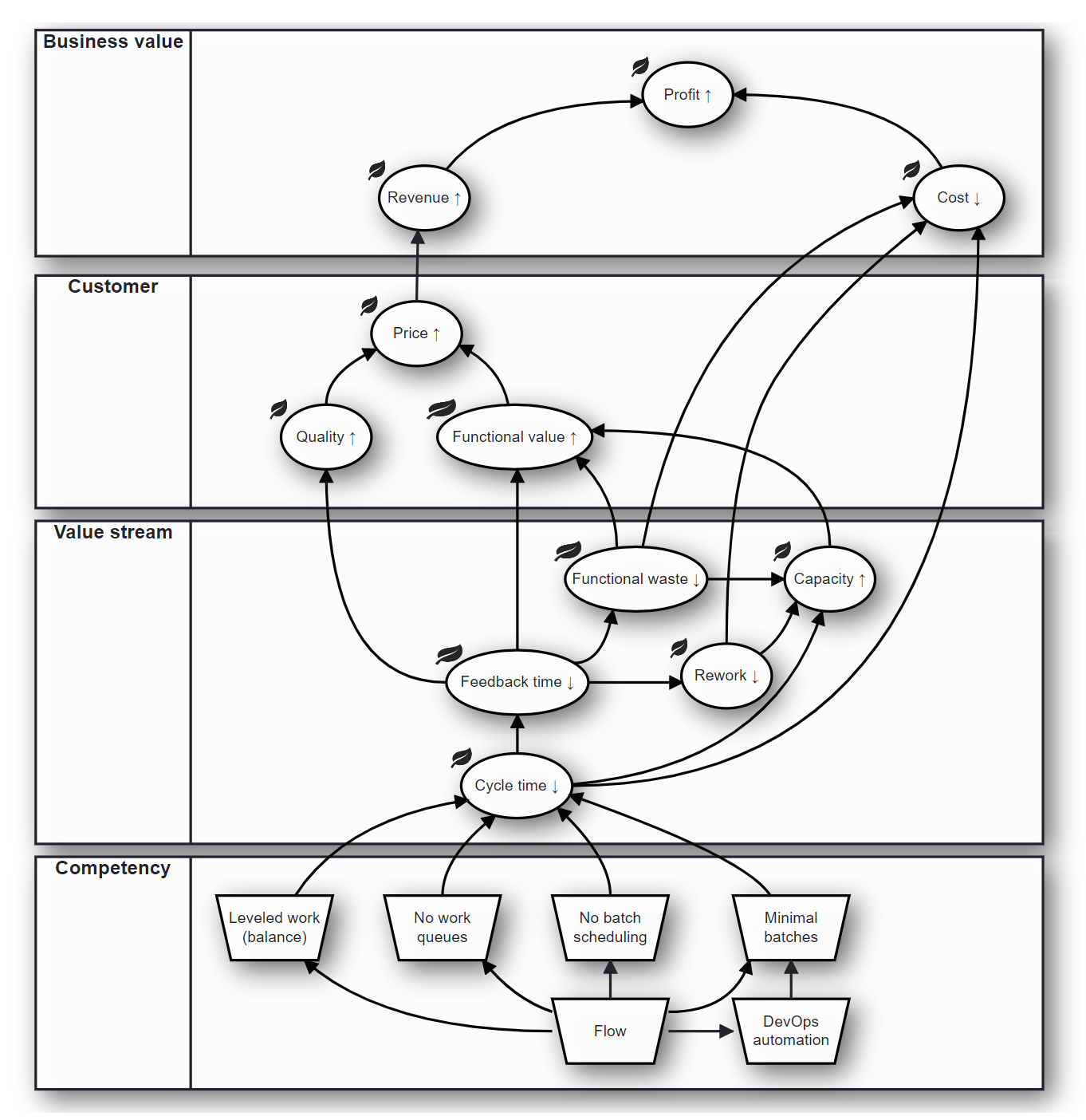

With “flow”, as described above, we work with the minimum possible cycle time. Cycle time is the time from the start of work on a change until the result of that change reaches the customer, i.e., until it is deployed in production.
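Following this definition, cycle time can be computed per change from two timestamps. This is a minimal sketch, assuming you can obtain (hypothetical) start-of-work and deployment timestamps for each change, for instance from an issue tracker and a deployment pipeline. The median is used here as one reasonable way to summarize cycle time across changes.

```python
from datetime import datetime, timedelta
from statistics import median


def cycle_time(work_started: datetime, deployed: datetime) -> timedelta:
    """Time from the start of work on a change until it is deployed in production."""
    if deployed < work_started:
        raise ValueError("a change cannot be deployed before work on it started")
    return deployed - work_started


# Hypothetical (work started, deployed) timestamps for three changes
changes = [
    (datetime(2023, 3, 6, 9, 0), datetime(2023, 3, 7, 15, 30)),
    (datetime(2023, 3, 6, 13, 0), datetime(2023, 3, 6, 17, 0)),
    (datetime(2023, 3, 7, 10, 0), datetime(2023, 3, 8, 10, 0)),
]
cycle_times = [cycle_time(start, end) for start, end in changes]
print(median(cycle_times))  # 1 day, 0:00:00
```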

Of course, this depends on where the “flow” process starts: at the start of DevOps, say, after code merge? At the start of development of a change? Or even earlier, in the realm of the Product Owner, or even of the Product Manager? We defer this discussion to a later blog. But the essence is clear: “flow”, to the extent that we adopt it, implies “shortest possible time to customer”.

“Flow” gives you a way of working that lends itself very well to automation. In DevOps, tooling to automate the process is quite common nowadays. But as long as you work in large batches of changes, applying this tooling alone will not result in true automation. “Flow” is needed to make automation happen.

Deliver more value through Flow

“Shortest possible time to customer” also implies the shortest possible time to get feedback on your work from the customer! Combined with working in the smallest possible batches (“one-piece-flow”), this creates big gains for you.

This “fastest possible feedback” does the following for you:

- Rework time is minimal, because it is immediately clear to you what went wrong and where to look for a fix: only this one change left your hands, just a very short while ago.

- If you implemented something that the customer was not waiting for, you will hear about it almost immediately. You can no longer get far off track by implementing “functional waste”.

- For the same reason, the quality of your product is higher. You find out almost immediately what you did wrong in the relatively small, single change that you just implemented.

These effects, in turn, will do a lot for you, because:

- You no longer spend your time on rework and on implementing unwanted functionality. Instead, you can spend this time on producing more of what the customer actually appreciates. In other words: you create more “functional value” for the customer.

- Higher quality, combined with higher functional value, enables you to negotiate a better price with the customer! A higher price, combined with better customer retention, simply means more revenue. And this is business value for your own enterprise.

- Reducing or eliminating rework and functional waste also reduces cost, because no effort goes into doing the wrong things. This lowers operational costs. Combined with the increase in revenue (see above), this leads to more profit for your enterprise. This is true business value.

- Note that you also reduce operational costs for a very basic reason: inherent to the concept of “flow”, developers, reviewers, testers and anyone else involved in the process spend less (or no) time on figuring out what to do next and on handling tasks. Any remaining effort relates directly to value-adding work. There are therefore fewer “transaction” costs, as “flow thinkers” say.

By the way: “flow thinkers” often call the sequence (or network) of value-adding steps (activities) that leads to a customer outcome a “value stream”.

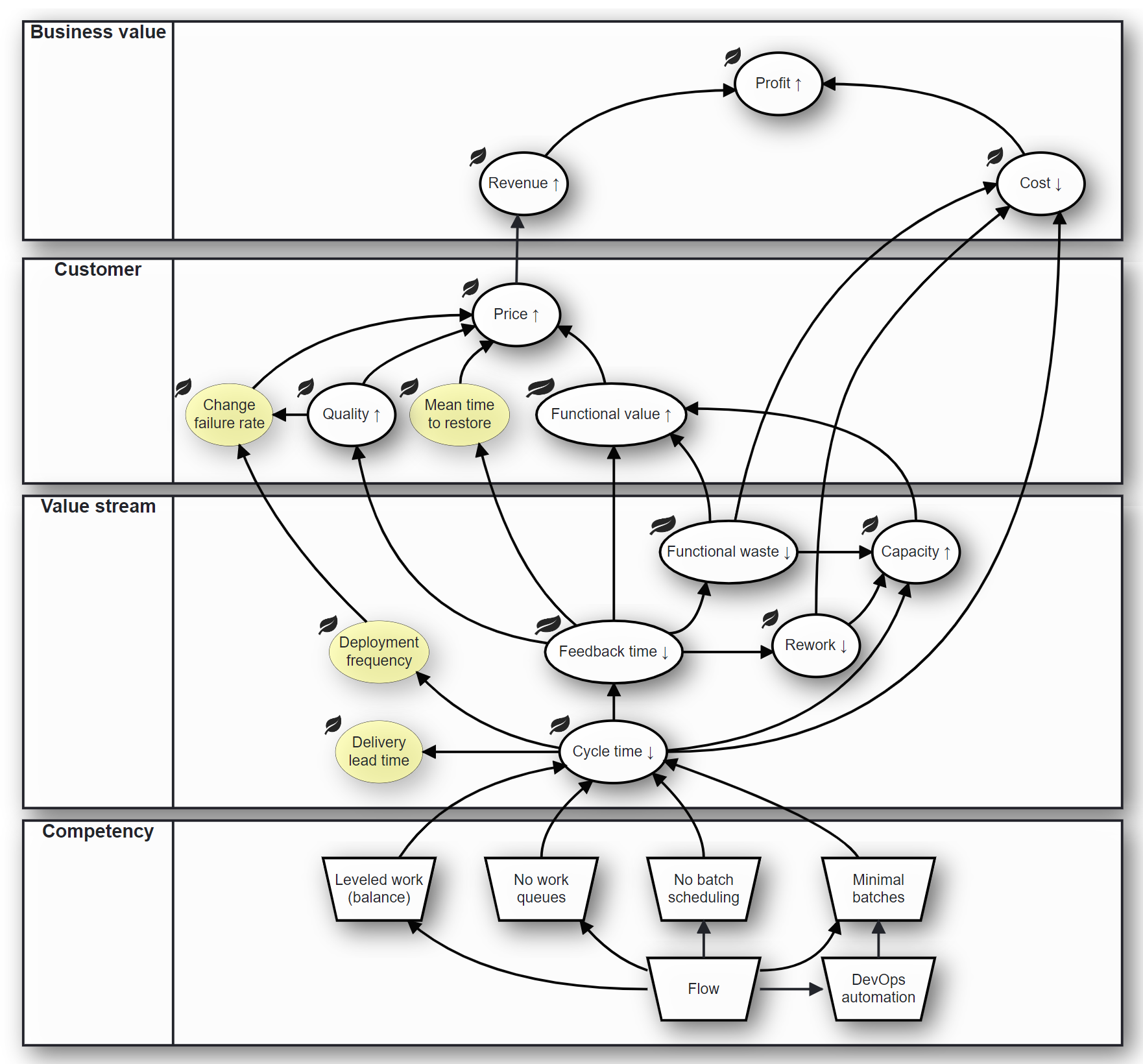

DORA & flow metrics

A transition to “flow” in DevOps reduces the cycle time of DevOps. In DORA terms, this is a reduction of Lead Time for Changes (also called Delivery Lead Time): the time it takes a change to “flow” from merge to deployment.

Decreasing batch sizes in DevOps, ultimately down to one-piece-flow deployment, directly increases the DORA metric Deployment Frequency. Above we argued how cycle time reduction, and the resulting reduction of feedback time, increases quality and decreases rework. Along the same lines, it can be argued that it reduces the DORA metrics Change Failure Rate and Mean Time To Restore (or Time To Restore Service).

From this you can directly see how the impact of a transition to “flow” can be measured with DORA metrics, at least in DevOps.
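As an illustration of how these metrics could be derived, here is a minimal sketch that computes the four DORA metrics from a list of deployment records. The `Deployment` record, its fields and the sample data are hypothetical. The sketch assumes that lead time runs from merge to deployment (as described above) and that a failure starts at the moment of the failed deployment; it uses the median for lead time and the mean for time to restore.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import List, Optional


@dataclass
class Deployment:
    """Hypothetical record of one production deployment."""
    deployed_at: datetime
    merged_at: List[datetime]                # merge times of the changes it contains
    failed: bool = False                     # did it cause a failure in production?
    restored_at: Optional[datetime] = None   # when service was restored after a failure


def dora_metrics(deployments: List[Deployment], period_days: float) -> dict:
    """Compute the four DORA metrics over an observation period (sketch)."""
    if not deployments:
        raise ValueError("no deployments in period")
    # Lead Time for Changes: from merge to deployment, per change
    lead_times = [d.deployed_at - m for d in deployments for m in d.merged_at]
    failures = [d for d in deployments if d.failed]
    # Assumption: time to restore runs from the failed deployment until restoration
    restore_times = [d.restored_at - d.deployed_at
                     for d in failures if d.restored_at is not None]
    return {
        "deployment_frequency_per_day": len(deployments) / period_days,
        "median_lead_time": median(lead_times) if lead_times else None,
        "change_failure_rate": len(failures) / len(deployments),
        "mean_time_to_restore": (sum(restore_times, timedelta()) / len(restore_times)
                                 if restore_times else None),
    }


def day(n: int) -> datetime:
    """Example helper: midnight, n days after a fixed start date."""
    return datetime(2023, 3, 1) + timedelta(days=n)


# Example: four small deployments over four days, one of which failed
deployments = [
    Deployment(day(1), [day(0)]),
    Deployment(day(2), [day(1), day(2) - timedelta(hours=4)]),
    Deployment(day(3), [day(2)], failed=True, restored_at=day(3) + timedelta(hours=2)),
    Deployment(day(4), [day(3)]),
]
for name, value in dora_metrics(deployments, period_days=4).items():
    print(f"{name}: {value}")
```

Comparing these numbers before and after reducing batch sizes is one way to observe the shift described above: Deployment Frequency goes up while lead time goes down.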

Now suppose that you want to achieve “ultimate flow” (one-piece-flow) through stepwise improvements, or “continuous improvement”. In terms of the “water and rocks analogy” used above, you want to lower the water level in DevOps step by step. You may define a milestone for each step and set objectives for the DORA metrics at each milestone. The lower the cycle time, the shorter the time between milestones can be, and the more frequently these DORA metrics can be re-evaluated.
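Milestone objectives of this kind could be checked mechanically. The sketch below is hypothetical: the `Milestone` fields, the metric names and the target values are illustrative assumptions, not recommended DORA benchmarks. It returns the metrics that missed their objective, which is where the search for underlying problems would start.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import List


@dataclass
class Milestone:
    """Hypothetical DORA objectives for one improvement step."""
    name: str
    min_deployments_per_day: float
    max_lead_time: timedelta
    max_change_failure_rate: float
    max_time_to_restore: timedelta


def missed_objectives(milestone: Milestone, measured: dict) -> List[str]:
    """Return the names of the metrics that did not meet the milestone's objectives."""
    checks = {
        # Higher is better for deployment frequency; lower is better for the rest
        "deployment_frequency_per_day":
            measured["deployment_frequency_per_day"] >= milestone.min_deployments_per_day,
        "lead_time": measured["lead_time"] <= milestone.max_lead_time,
        "change_failure_rate":
            measured["change_failure_rate"] <= milestone.max_change_failure_rate,
        "time_to_restore": measured["time_to_restore"] <= milestone.max_time_to_restore,
    }
    return [metric for metric, met in checks.items() if not met]


# Illustrative milestones: each step lowers the "water level" a little further
milestones = [
    Milestone("weekly deployments", 1 / 7, timedelta(days=5), 0.30, timedelta(days=1)),
    Milestone("daily deployments", 1.0, timedelta(days=1), 0.15, timedelta(hours=4)),
]

# Hypothetical measurements taken at the first milestone
measured = {
    "deployment_frequency_per_day": 0.2,
    "lead_time": timedelta(days=6),
    "change_failure_rate": 0.25,
    "time_to_restore": timedelta(hours=8),
}
print(missed_objectives(milestones[0], measured))
```

With these sample values only the lead time objective of the first milestone is missed, so the investigation would focus on the few changes whose merge-to-deployment time was too long.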

Suppose that, at a certain milestone, you observe that a DORA metric did not improve as expected. It will not take long to find the underlying problems that cause this lack of performance, because, due to the short-cycle nature of “flow DevOps”, only a very small set of changes needs to be sorted out. These changes are still fresh in the minds of the developers, reviewers, testers, etc., who worked on them. In other words: a transition to “flow” in DevOps makes measurement and continuous improvement of DevOps itself really effective!

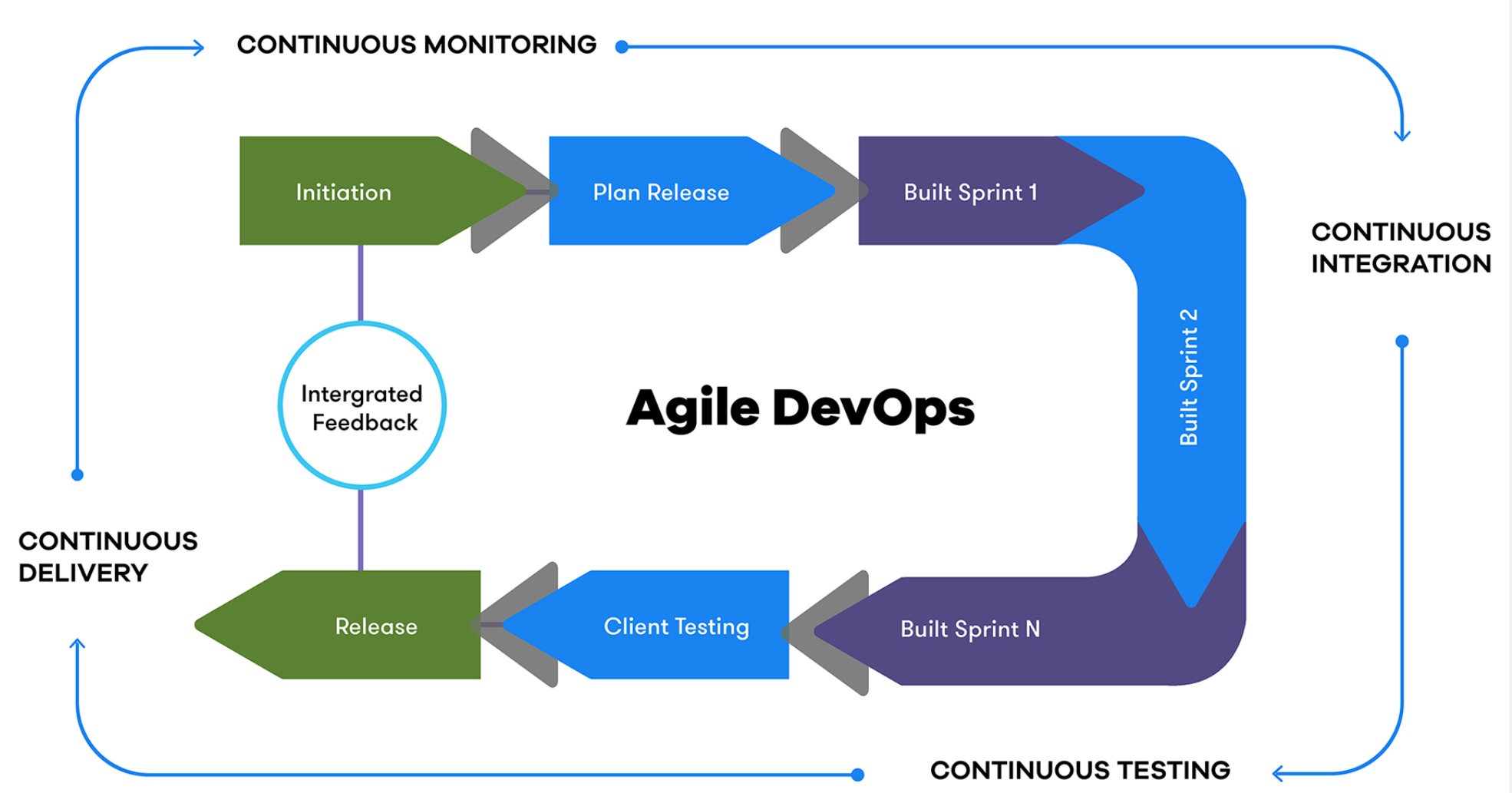

Value streams of Agile DevOps & beyond

As discussed above, measuring and managing “flow” in DevOps can use DORA metrics as a starting point for continuous improvement. DevOps pipelines are about the most “downstream” value stream, the one closest to the customer. It makes good sense to work towards “flow” here first.

Once “flow” is achieved in DevOps, it is time to extend “flow” further “upstream”. When the value stream of development (coding, review, etc.) is included, cycle time is measured from the point where a developer starts implementing a task for a user story, or starts fixing a bug. When the value stream under control of the Product Owner (PO) is included, cycle time may start from the point where the PO releases a user story by making it visible in the sprint backlog. It may even start from the point where the PO starts creating a user story against an epic in the committed roadmap released by the Product Manager (PM), or from the release of the committed roadmap itself.
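The choice of start point has a large effect on the measured cycle time. The sketch below uses hypothetical event names and timestamps for a single user story to show how cycle time grows as more of the upstream value stream is included.

```python
from datetime import datetime, timedelta

# Hypothetical timestamps for one user story, ordered from upstream to downstream
story_events = {
    "roadmap_released": datetime(2023, 2, 1),
    "story_created": datetime(2023, 2, 20),
    "story_in_sprint_backlog": datetime(2023, 3, 1),
    "dev_started": datetime(2023, 3, 6),
    "merged": datetime(2023, 3, 8),
    "deployed": datetime(2023, 3, 9),
}


def cycle_time_from(start_event: str, events: dict, end_event: str = "deployed") -> timedelta:
    """Cycle time from a chosen start event until the end event (deployment by default)."""
    return events[end_event] - events[start_event]


# Widen the value stream step by step, from DevOps only to the full roadmap
for start in ("merged", "dev_started", "story_in_sprint_backlog",
              "story_created", "roadmap_released"):
    print(f"from {start}: {cycle_time_from(start, story_events).days} days")
```

In this made-up example, the same story has a DevOps cycle time of one day but a roadmap-to-customer cycle time of more than a month, which is why the start point should be stated whenever cycle time is reported.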

Ultimately, the continuously improved network of value streams forms a closed loop. It starts from the customer, as the source of ideas and bugs, and ends at the customer, as the beneficiary of the value that is delivered.

An upcoming blog in this series will propose a concrete method for continuous improvement of value delivery through Agile DevOps and beyond. “Thinking in value delivery systems”, as discussed in the previous blog, and “thinking in flows”, as discussed here, form the pillars of that method.

Do you want to know more?

VDMbee introduces its Agile DevOps acceleration service, which helps organizations improve the performance of their software development. If you would like to know more, please navigate to our Agile DevOps acceleration service page, where you can also request information.